Written by Cremieux Recueil.

Warm and caring mothers tend to have more successful children, but this doesn’t necessarily mean there’s an effect of warmth and care. The problem is that warm and caring mothers tend to pass on the sorts of genes that are correlated with being warm and caring. Since people with such traits are often successful in life in other ways, we should expect kids raised by warm and caring mothers to be successful for environmental and genetic reasons. The question then arises: to what degree does each of these influences matter?

In an article in Aporia published last August, Curtis Dunkel mentioned the issue of genetic confounding with respect to supportive parental behaviors. He cited a study by Roisman and Fraley:

> Using a sample of twins, Roisman and Fraley examined the role of supportive parental behavior on the academic skills of young children. They found a significant association (r = .32). Consistent with the Wilson effect, they found that the shared environment not only accounted for the majority of the variance in each parental support and academic skills individually, but fully 95% of the variance in the correlation between the two variables. The remaining 5% was due to the nonshared environment, with the additive genetic component accounting for 0%. As expected, they also found that the specific variable of parental support, measured prior to kindergarten, was predictive of a child’s subsequently measured academic skills. As suggestive as the results from this study are, they do not directly address the questions of whether the effect of supportiveness is on general intelligence and whether its effect extends beyond childhood.

However, a careful reading of Roisman and Fraley’s study is less reassuring as regards the issue of genetic confounding (in this case, between parental behavior and academic skills) than that paragraph seems to suggest.

The first major issue is low reliability. A variable with high reliability will be very consistent over time since the factors it measures will be consistently present across time periods. But how do we measure parental support? Roisman and Fraley did so by observing parents with their kids until there was high interrater agreement. Their measure of supportive parenting seemed likely to be reasonably reliable when children were 24 months of age (Cronbach’s α = 0.76). Yet when kids entered pre-kindergarten, reliability fell substantially (0.65). The same was true of their measure of intrusive parenting, which fared even worse at both 24 months of age (0.66) and pre-kindergarten (0.55).
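For readers unfamiliar with the statistic, here is a minimal sketch of how Cronbach’s α is computed from item-level scores. Note that α is an internal-consistency coefficient; stability across ages is a separate question. The ratings and the function name `cronbach_alpha` are hypothetical and purely illustrative; nothing here comes from Roisman and Fraley’s data.

```python
from statistics import pvariance

def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])  # number of items in the composite
    # Variance of each item across respondents.
    item_vars = [pvariance([r[i] for r in rows]) for i in range(k)]
    # Variance of the summed composite score.
    total_var = pvariance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical ratings: 5 parents scored on 3 items of a support composite.
ratings = [
    [4, 5, 4],
    [2, 3, 3],
    [5, 5, 4],
    [3, 2, 3],
    [1, 2, 2],
]
alpha = cronbach_alpha(ratings)
print(round(alpha, 2))
```

The coefficient rises when items move together across respondents, and falls when much of each item’s variance is idiosyncratic.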

It gets worse. The reliable variance attributable to a common construct that can be measured over time was apparently minimal. What the authors meant by parental support at 24 months of age was apparently quite dissimilar to what they measured as supportive parenting when kids entered pre-kindergarten. The correlation across the two time periods was just r = 0.32, and for intrusive parenting, it was a piddling 0.10.

In stark contrast with the results for parenting measures, early reading and mathematics scores were highly correlated with one another in pre-kindergarten and kindergarten (r’s = 0.78 and 0.83, respectively). They were also correlated quite highly across time (for these ages), with an r of 0.62.

The reason measures of academic skills capture something consistent over time is that, like other tests, they largely measure intelligence, and intelligence is consistently a major latent source of variance in performance at different ages.[1] The main reason these scores correlate somewhat less strongly across ages in these early cohorts is simply measurement error being unusually high in the early years of life.

Low reliability leads to another issue.

Low power plagues the classical twin design, and as a result, models fit to twin data are prone to misspecification. This can happen when the shared environmental variance in the model (“C”) is constrained to equal exactly zero even though its point estimate is still sizable. If the sample size is small and the confidence interval for C overlaps zero, the structural equation model with C fixed at zero will often look better on parsimony-adjusted fit statistics such as AIC, even if the true value is greater than zero.

Most twin studies end up with too little power, since variance decompositions require large samples to render precise results. Since we know the Wilson effect exists (heritability rises with age while shared environmental influence declines), we also know that at early ages, the problem will often go the other way: A, rather than C, is the component that gets dropped. If genetic influence is small, and particularly if it is small at the earliest ages when measurement error is higher, then you’re more likely to fit a “CE” model than an “ACE” model, even if the ACE model is right. Indeed, many CE and AE models are the result of chasing fit statistics, regardless of their relationship to reality.
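To get a feel for how imprecise a variance decomposition is at this scale, here is a rough sketch using Falconer-style estimates rather than the structural equation models the authors actually fit. The twin correlations are hypothetical, the standard error of a correlation uses the usual large-sample approximation, and treating the study’s 72 MZs and 240 DZs as pair counts is an assumption.

```python
from math import sqrt

def falconer(r_mz, r_dz):
    """Falconer-style A, C, E estimates from MZ and DZ twin correlations."""
    a = 2 * (r_mz - r_dz)   # additive genetic share
    c = 2 * r_dz - r_mz     # shared environmental share
    e = 1 - r_mz            # nonshared environment plus error
    return a, c, e

def se_r(r, n_pairs):
    """Large-sample approximation to the standard error of a correlation."""
    return (1 - r ** 2) / sqrt(n_pairs - 1)

def se_a(r_mz, r_dz, n_mz, n_dz):
    """Approximate standard error of A = 2 * (r_mz - r_dz), independent groups."""
    return 2 * sqrt(se_r(r_mz, n_mz) ** 2 + se_r(r_dz, n_dz) ** 2)

# Hypothetical correlations for an early-childhood phenotype with modest A.
r_mz, r_dz = 0.75, 0.68
n_mz, n_dz = 72, 240  # assumed to be numbers of pairs

a_hat, c_hat, e_hat = falconer(r_mz, r_dz)
half_width = 1.96 * se_a(r_mz, r_dz, n_mz, n_dz)
print(f"A = {a_hat:.2f} +/- {half_width:.2f}, C = {c_hat:.2f}, E = {e_hat:.2f}")
```

Under these assumptions the 95% interval for A is wider than the estimate itself, so a model that fixes A at zero would be hard to reject even though A is not zero by construction.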

A third problem is the construct identity of supportive parenting.

The reliable variance in the parental support composite was entirely attributable to C, even in the ACE model. In other words, parents did not give more similar levels of parental support to identical (monozygotic, MZ) twins than to fraternal (dizygotic, DZ) ones. This precludes two things:

1) A genetic correlation between parental support and academic achievement (because there are no genetic correlations without genetic involvement).

2) The utility of the bivariate ACE model[2] the authors would go on to fit (which doesn’t deal with differences between parents in the level of parental support).

Because 2) is precluded, the study’s estimand cannot tell us whether the relationship between parental support and academic achievement is genetically confounded. This already undermines the interpretation of their results as given in the paragraph quoted from Dunkel’s piece. Yet Roisman and Fraley’s study is problematic in other ways as well.

Another major problem with the study concerns the construct identity of residual academic achievement. In particular, the paper does not provide enough detail to tell us what’s going on. We don’t know how much genetic variance is contained in parental support measured at different timepoints, or what the genetic correlation was across ages. But this isn’t so important.

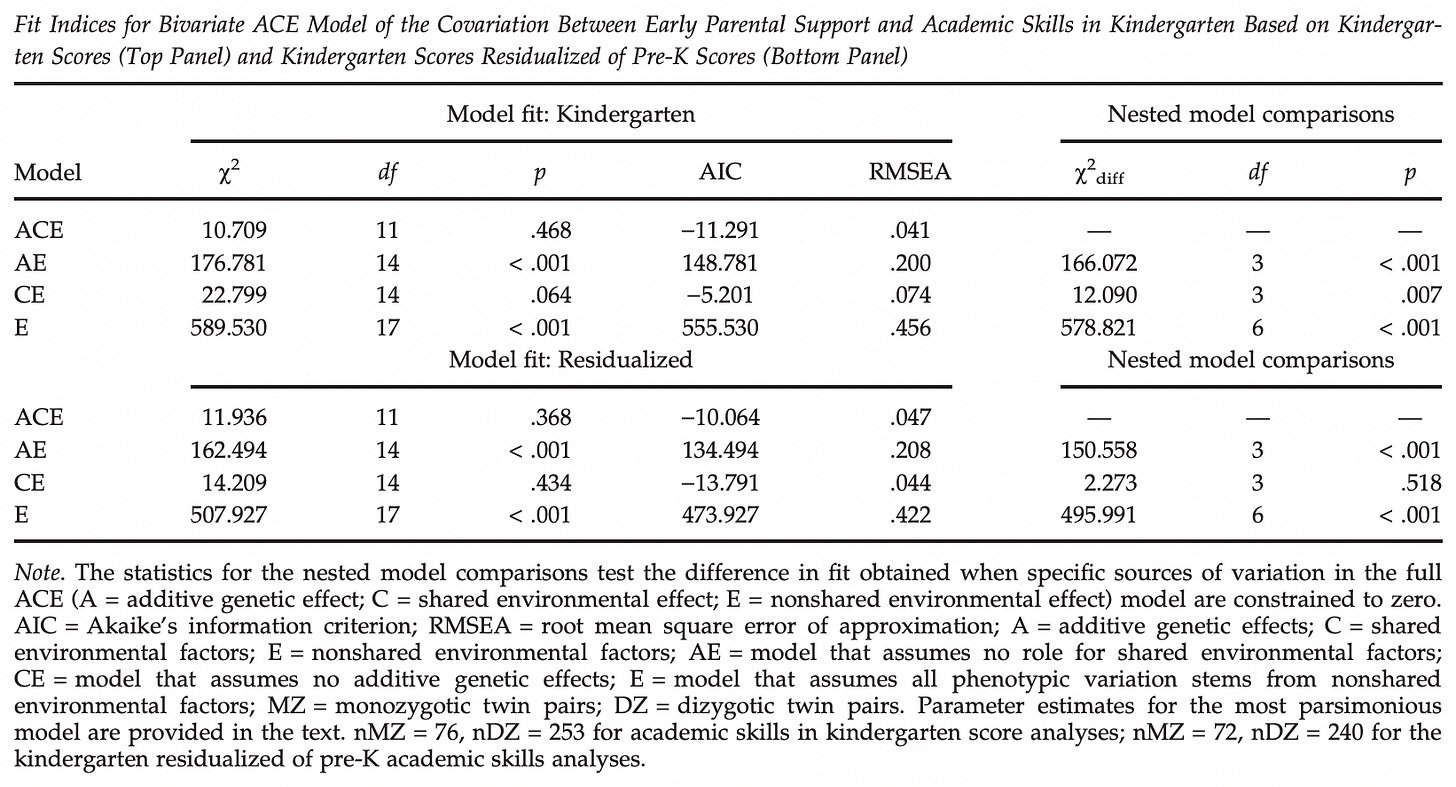

More concerningly, we don’t have the ACE breakdowns in academic achievement at different ages, and many of the measurement ages are misaligned. Nor do we have a breakdown of the genetic and environmental correlations for the different models that were fit in tables like the most important one, Table 4. This is shown below:

In the upper panel, we see results for the bivariate relationship between academic skills and parental support. We also see that an ACE model is supported (AIC = -11.291), with the next-best fitting being a CE model (-5.201). In the lower panel, we see results for the relationship between academic skills and parental support where the former has been residualized for scores in pre-kindergarten. Here, the CE model is the best-fitting (-13.791).

What exactly is the phenotype in the lower panel? If you have a measure that is reliable because it measures g consistently over time and you take away the reliable variance in the test by residualizing for an earlier measurement, you have just residualized away a lot of the g variance. Suddenly, the phenotype is no longer intelligence; it’s the residual of intelligence in the test! This is problematic because we’re now talking about parental support’s relationship with a measure of specific skills and measurement error, not intelligence per se.
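A small simulation makes the point concrete. This is only a sketch under stated assumptions: each test score is modeled as a stable factor g plus independent noise, with the noise variance chosen so that scores correlate about 0.67 across occasions, in the neighborhood of the 0.62 reported above. All names and parameters are hypothetical.

```python
import random
from math import sqrt

def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def residualize(y, x):
    """Residuals from a simple least-squares regression of y on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    return [b - my - slope * (a - mx) for a, b in zip(x, y)]

rng = random.Random(0)
n = 5000
noise_sd = sqrt(0.5)  # assumed noise level; gives a cross-time r near 0.67

g = [rng.gauss(0, 1) for _ in range(n)]             # stable latent factor
t1 = [gi + rng.gauss(0, noise_sd) for gi in g]      # earlier test score
t2 = [gi + rng.gauss(0, noise_sd) for gi in g]      # later test score
t2_resid = residualize(t2, t1)                      # later score net of earlier score

r_raw = pearson(t2, g)          # how well the raw score tracks g
r_resid = pearson(t2_resid, g)  # how well the residualized score tracks g
print(round(r_raw, 2), round(r_resid, 2))
```

In expectation, this setup gives a correlation with g of about 0.82 for the raw score and about 0.37 for the residualized one. Most of what remains after residualizing is occasion-specific noise.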

Indeed, it isn’t surprising that this phenotype supported a CE model over an ACE model, since the C variance in cognitive tests is typically not variance in g. It is more often variance in specific skills. But these are the sorts of things that don’t have much predictive power, so we have less reason to care. Even if this test did have the correct estimand, it would be useless because it’s based on an indeterminate phenotype that is likely residualized of much of the variance we truly care about.

Yet another problem with the study is that it just didn’t have enough models.[3]

An easier way to determine whether parental support has an effect within twin pairs would be to test whether parental support predicts differences in academic achievement within pairs. This was not done, and the bivariate CE model suggests that the result would be too noisy and thus a null would be obtained. The reason the result would be noisy is that it would be reduced by the 95% of the variance attributed to C. (Since this is not a correlation attributable to A in the CE model, we know that the reduction would be similar within DZs and MZs.)
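The within-pair test described here can be sketched as a regression of twin differences in achievement on twin differences in parental support. This is an illustrative outline only: the data layout and the function name `within_pair_slope` are hypothetical, and the regression is run through the origin because the ordering of twins within a pair is arbitrary.

```python
def within_pair_slope(pairs):
    """Slope of achievement differences on support differences within twin pairs.

    pairs: list of ((support_a, score_a), (support_b, score_b)), one per twin pair.
    """
    dx = [a[0] - b[0] for a, b in pairs]  # within-pair difference in support
    dy = [a[1] - b[1] for a, b in pairs]  # within-pair difference in achievement
    sxx = sum(x * x for x in dx)
    if sxx == 0:
        # No within-pair variation in support: the test cannot be run.
        raise ValueError("no within-pair variance in parental support")
    return sum(x * y for x, y in zip(dx, dy)) / sxx

# Hypothetical pairs in which achievement differs by twice the support difference.
example_pairs = [
    ((3.0, 10.0), (1.0, 6.0)),
    ((2.0, 5.0), (2.5, 6.0)),
]
slope = within_pair_slope(example_pairs)
print(slope)
```

Because everything twins share cancels out of the differences, the slope rests only on the variance that is not shared within pairs. That is why a phenotype dominated by C leaves so little to estimate from.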

Because only five percent of the variance in this phenotype is left to work with, there wouldn’t be enough variance between twins to see if variance in parental support explains variance in academic achievement. In fact, we can use the ICC.Sample.Size R package to calculate the sample size that would be needed to determine if the residual 5% of the correlation (i.e., 0.01) is statistically significant.[4]

Running this tells us we need balanced groups of 78,485 twins. If, as in Roisman and Fraley’s study, the groups are unbalanced, an even larger sample would be needed. They had 72 MZs and 240 DZs. If their CE results are correct, there won’t be many samples out there that can run the necessary test.
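For readers without R, the order of magnitude can be cross-checked with a standard large-sample approximation for testing an intraclass correlation, of the form given by Walter, Eliasziw and Donner for reliability studies. Whether the package uses exactly this formula internally is an assumption here, and the function name `icc_sample_size` is hypothetical; the arguments simply mirror the footnoted R call.

```python
from math import ceil, log
from statistics import NormalDist

def icc_sample_size(p, p0=0.0, k=2, alpha=0.05, tails=2, power=0.80):
    """Approximate number of groups needed to detect ICC = p against ICC = p0.

    k is the number of members per group (2 for twin pairs).
    """
    z = NormalDist().inv_cdf
    z_alpha = z(1 - alpha / tails)  # critical value for the test
    z_beta = z(power)               # quantile for the desired power
    theta0 = p0 / (1 - p0)          # variance ratio under the null
    theta1 = p / (1 - p)            # variance ratio under the alternative
    log_c0 = log((1 + k * theta0) / (1 + k * theta1))
    n = 1 + 2 * (z_alpha + z_beta) ** 2 * k / (log_c0 ** 2 * (k - 1))
    return ceil(n)

n_needed = icc_sample_size(p=0.01, p0=0, k=2, alpha=0.05, tails=2, power=0.80)
print(n_needed)
```

Under these assumptions the result is in line with the 78,485 quoted above. The required sample grows roughly with the inverse square of the correlation being tested, which is why so small a residual is effectively out of reach.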

For all the reasons I’ve mentioned – low reliability, indeterminate construct identities, and power issues aplenty – I don’t believe the study did address the issue of genetic confounding. In other words, the relationship between parental support and kids’ achievement absent genetic confounding isn’t yet known.

However, that doesn’t mean there’s no hope.

After all, the issue of genetic confounding could be readily addressed with a children-of-twins design, or any number of other designs based on measurements of the siblings or cousins of parents. Those models could decompose the A, C, and E variance in parental phenotypes, allowing us to estimate their genetic and environmental contributions to kids’ phenotypes. Adoption designs and randomized controlled trials where parents are assigned to different parenting strategies are also theoretically possible.

We need not wallow in despair over the enormous power demands of standard designs. Instead we can use a different design that’s more appropriate to answering the question. This will give us a clear answer at a much lower cost.

Cremieux Recueil writes about genetics, 'metrics, and demographics.


[1] Personality traits like conscientiousness and other traits like motivation and interest also provide stable variance in scores across time and place, but intelligence is the most stable, major factor.

[2] This is a model in which two ACEs are fit simultaneously and their respective A, C and E factors are correlated with one another. It is used when estimating how much of the association between two traits is attributable to genetic versus environmental factors.

[3] When someone fits a bivariate ACE, it’s probably better to fit a combination of models, including an ACE versus an AE model. But this problematizes path tracing rules because factors may only be relatable through other ones. A better model, which will lead to less misfit via path tracing rules, is a Cholesky decomposition. If you run a Cholesky decomposition instead of a bivariate ACE, you can (thanks to higher power) more cleanly compute the degree to which genes and environments in different phenotypes are correlated through path-tracing rules.

That would be more useful for estimating the effects of parenting in this context. After all, we’re interested in differences between parents, and we can check the correlation between kids’ (and thus parent-proxies’) genes involved in academic achievement and the C variance from parental support (which, as noted, is not necessarily attributable to C among parents). This is still a high-variance estimate, and it’s contingent on kids being good proxies for parental genes (which requires assumptions about mating and whatnot), but it’s still much closer to what we want. Unfortunately, it just wasn’t tested.

[4] In R, after loading the package with `library(ICC.Sample.Size)`: `calculateIccSampleSize(p = 0.01, p0 = 0, k = 2, alpha = 0.05, tails = 2, power = 0.80)`

