Written by Bryan Pesta.

In 2022, intelligence researcher Bryan Pesta was fired from his tenured position at Cleveland State University. What follows is an excerpt from his new book Condemned: The Taboo Science of Race and Intelligence, which is available for purchase. (Reviews are appreciated.)

By 2018, I had published several articles on intelligence and organizational outcomes, along with others on sexual harassment, decision making and employment law. My research portfolio was eclectic but typical for a mid-career academic at a public research university.

That year, I began collaborating with researchers who were widely regarded as controversial. The controversy had little to do with technical skill and more to do with credentials and topic. They were not university employees and did not hold doctoral degrees. They studied a question that had long attracted attention and discomfort in the social sciences: why Black populations, on average, score lower on standardized intelligence tests than White populations, and whether genetics might contribute to the difference.

In 2019, we published a paper titled Global Ancestry and Cognitive Ability.1 It appeared in a peer-reviewed academic journal called Psych.2 The study analyzed restricted genetic data obtained through the National Institutes of Health’s controlled-access system.3 I applied for the access because I was the only collaborator with a university affiliation. The final line of the abstract stated, “Results converge on genetics as a potential partial explanation for group mean differences in intelligence.”

Soon after publication, a complaint was sent to university officials alleging improper use of the NIH data:

The authors offered the conclusion that, “Results converge on genetics as a potential partial explanation for group mean differences in intelligence.” Use of NIH data for studies of racial differences in this way is both a violation of the data use agreement and unethical. I am interested in knowing how this data abuse occurred—specifically who signed off on the data access request and where human subjects review occurred by a qualified institutional review board.4

The complaint did not address the study’s design, methodology or statistical analyses. Instead, it questioned whether the data could be used for this type of research at all.

The study

I believe that some questions about intelligence and group differences become difficult to pursue not because they are empirically inaccessible, but because their possible answers are socially sensitive.5 Other chapters of Condemned describe the institutional mechanisms through which inquiry can narrow—publication friction, funding incentives, and professional risk—and argue that these forces shape the literature before conclusions are openly debated.

Here I turn from general description to a concrete example: the study that ultimately led to my termination. My purpose is not to defend a conclusion, but to document how a specific piece of research was conducted, what it reported and how it was received.

Our paper examined the relationship between genetic ancestry and cognitive ability using restricted-access data from the Philadelphia Neurodevelopmental Cohort (PNC), a large community sample of children and adolescents collected through the National Institutes of Health.6

Research in this area is often criticized for small samples, indirect ancestry measures, or limited phenotyping. The PNC dataset addressed these concerns by combining dense genotyping with a standardized cognitive battery administered to thousands of participants drawn from a single metropolitan region.

The question was narrow: whether variation in genetic ancestry within a U.S. sample was statistically associated with variation in cognitive performance, and how that association compared to alternative explanations frequently proposed in the literature.

Cognitive Ability: Cognitive ability was measured using the Penn Computerized Neurocognitive Battery, a widely used assessment designed to capture general cognitive performance across multiple domains.7 Composite scores were derived from subtests following established procedures.

Prior measurement-invariance research on comparable batteries indicates that these tests measure the same latent construct across groups.8 Consistent with that literature, the results did not indicate differential test functioning between Black and White participants.

The observed mean difference between groups in this sample was 14.72 points (about 0.98 standard deviations on a scale where one standard deviation is 15 IQ points), similar to the gap of approximately one standard deviation repeatedly reported in large datasets.9

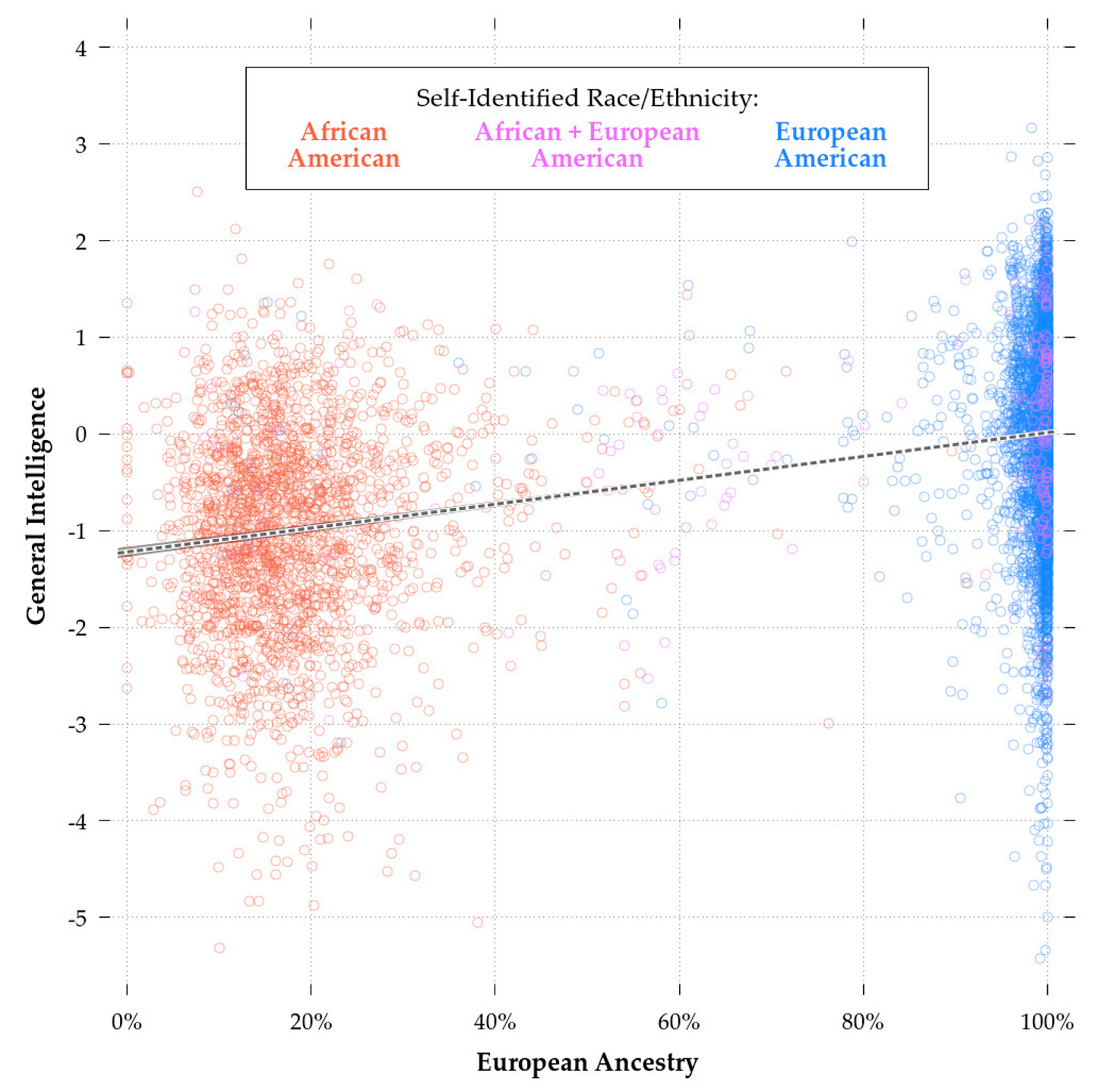

Genetic Ancestry: Individual ancestry estimates were calculated using standard admixture methods applied to genome-wide genotype data.10 The primary variable of interest was percentage European ancestry for each participant.

Self-Reported Race: Parents identified participants’ race using standard NIH categories. This allowed comparison between social classification and genetic ancestry as predictors.

Skin Pigmentation: Skin pigmentation was estimated from non-identifying genetic markers using published prediction algorithms widely used in biomedical and forensic genetics.11 The purpose was to evaluate hypotheses attributing cognitive differences to discrimination linked to visible phenotype.

Socioeconomic Status: The dataset contained limited socioeconomic measures. Parental education served as the available proxy. Although imperfect, parental education is commonly used in developmental research and correlates strongly with cognitive outcomes.12

Analytic Strategy: Multiple regression models estimated the association between cognitive scores and four predictors simultaneously: genetic ancestry, self-reported race, pigmentation, and socioeconomic status. This approach isolates the statistical relationship of each predictor while holding the others constant.13

No novel statistical techniques were introduced; the models followed standard practice in behavioral and genetic epidemiology.
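To illustrate what "holding the others constant" means in a multiple regression, here is a minimal Python sketch on synthetic data. It is not the paper's code, and nothing in it comes from the PNC: the four predictors `a`, `b`, `c`, `d`, the coefficients used to generate the outcome, the sample size, and the helper names `fit_ols`, `standardize`, and `make_synthetic` are all assumptions made up for this example. The sketch only shows the general mechanism by which a predictor that correlates with the outcome on its own can lose its association once a correlated predictor is entered in the same model.

```python
import numpy as np


def fit_ols(X, y):
    """Ordinary least squares for y ~ X; returns coefficients, intercept first."""
    design = np.column_stack([np.ones(len(y)), X])
    coefs, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coefs


def standardize(values):
    """Rescale each column (or a 1-D array) to mean 0 and standard deviation 1."""
    return (values - values.mean(axis=0)) / values.std(axis=0)


def make_synthetic(n=5000, seed=0):
    """Generate purely hypothetical data; no real measurements are involved."""
    rng = np.random.default_rng(seed)
    a = rng.normal(size=n)
    # b and c are correlated with a but have no direct effect on the outcome.
    b = 0.8 * a + 0.6 * rng.normal(size=n)
    c = 0.8 * a + 0.6 * rng.normal(size=n)
    # d is independent of the other predictors and has its own effect.
    d = rng.normal(size=n)
    y = 0.5 * a + 0.3 * d + rng.normal(size=n)
    return np.column_stack([a, b, c, d]), y


X, y = make_synthetic()
Xz, yz = standardize(X), standardize(y)

# All four predictors entered simultaneously (standardized coefficients).
joint = fit_ols(Xz, yz)[1:]

# Predictor b entered alone: it picks up the association it shares with a.
alone_b = fit_ols(Xz[:, [1]], yz)[1]

for name, beta in zip("abcd", joint):
    print(f"joint model, predictor {name}: beta = {beta:.3f}")
print(f"b entered alone: beta = {alone_b:.3f}")
```

In this made-up setup, `b` shows a clear association with the outcome when entered alone, but its coefficient in the joint model falls close to zero because `a` accounts for the shared variance. Standardizing the variables first puts the coefficients on a common scale, which is one conventional way to compare the relative strength of predictors.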

Results: Genetic ancestry showed a statistically significant association with cognitive ability after controlling for race, pigmentation, and socioeconomic status. Self-reported race and pigmentation did not independently predict scores once ancestry was included. Socioeconomic status remained associated with cognitive ability, but its association was weaker than that of genetic ancestry.

Individual scores overlapped substantially, but the mean trend increased monotonically with ancestry across the full range of the sample.

Interpretation: The paper interpreted the findings cautiously. The results were described as consistent with the possibility of a partial genetic contribution to group mean differences, while explicitly noting alternative explanations and the need for replication.14

The study did not claim determinism, exclude environmental influences, or make policy recommendations. It reported a statistical association and evaluated competing hypotheses within the limits of the available data.

The reaction

Subsequent objections rarely focused on the statistical model itself. Instead, discussion centered on interpretation and potential social consequences. Critics emphasized that the conclusions could be harmful, misused or ethically problematic.

The controversy therefore did not primarily concern whether the analysis followed conventional methods, but whether the question should have been investigated.

Scientific disagreement is routine. Methods, assumptions and interpretations are constantly contested. Normally, such disputes are addressed through replication, critique and additional data. In this case, the dispute moved outside that process. Professional sanction followed publication rather than refutation of the results.

The significance of the episode lies less in whether the study was correct than in what it reveals about the boundary between empirical disagreement and professional acceptability. If some results are treated as intolerable independent of methodological error, the structure of inquiry changes. Certain hypotheses become costly to test regardless of evidentiary standards.

The cost extends beyond any individual researcher. When some findings carry consequences unrelated to methodological quality, the published record no longer reflects only evidentiary weight. Absence can be interpreted as disconfirmation even when it reflects avoidance.

The next part of Condemned returns to the broader evidentiary landscape. The overriding question is not whether the data are complete; they never are. The question is how institutions respond when incomplete data point in uncomfortable directions…

Bryan Pesta is a Cleveland-based writer and former tenured professor whose work spans both academic research and fiction.


I was not the editor of this journal at the time of publication and was not involved in its acceptance decision.

National Institutes of Health, Genomic Data Sharing Policy (2014).
